A discussion of missing versus null annotations and how VOC XML and COCO JSON handle them.

Preparing data for computer vision models is a tedious task. Even assuming training images are appropriately representative for inference, managing annotations quickly becomes a challenge. In some annotation formats (PASCAL VOC XML, YOLO DarkNet) there is one annotation file per image. In others (COCO JSON, TensorFlow Object Detection CSV) there is a single annotation file that denotes bounding boxes for all images.

A particularly challenging problem, then, is identifying images that have no annotations, whether by accident or by design.

A missing annotation occurs when an image contains objects that should have been annotated but were not. This is problematic because the model is trained to treat those objects as background, which teaches it false negatives.

A null annotation occurs when an image has no objects present, so no bounding boxes need to be recorded. This is not necessarily problematic – in fact, it may be desirable, because it teaches a model that objects are not always present in the frame.

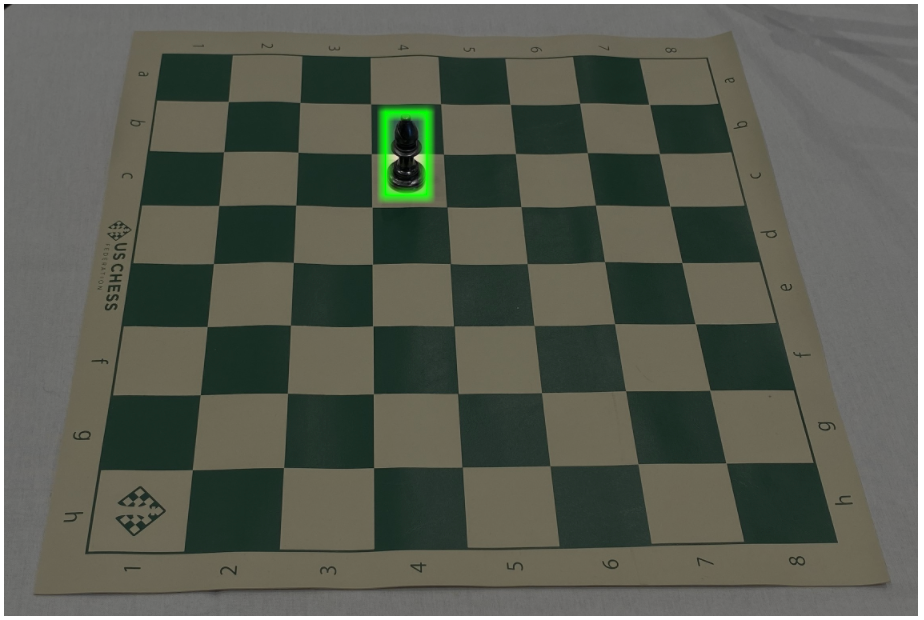

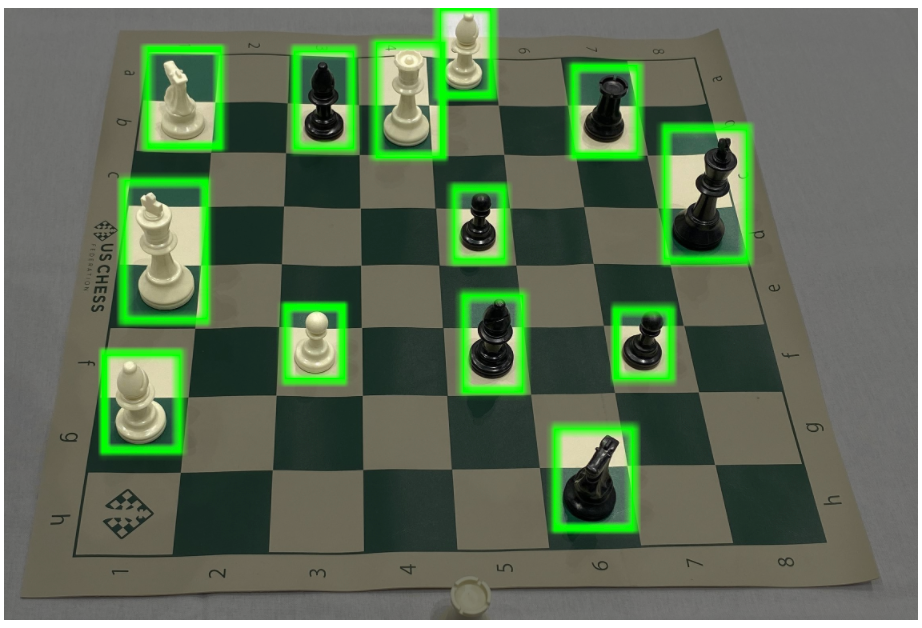

Let's consider an example in a chess dataset:

Above, see four chess images with various board states. The empty board has no pieces and therefore no annotations; we will see its null annotation below. The lighter board has pieces on it, but none are annotated; it is missing annotations. The darker multi-piece board has 13 piece annotations; it is the normal state we expect. The last darker board has a single piece annotated; it is also a normal state.

Null Annotations in VOC XML and COCO JSON

The board with no pieces present does not need any bounding boxes. However, we may still want to include it in our dataset, for example to teach our model that a patterned chess board can exist even when no pieces are present.

So, if we seek to include an image that does not have any bounding boxes in our dataset, how do we denote that in our annotations? It depends on the annotation format.

In PASCAL VOC XML, if we include both this image and its annotation file in our dataset, we may see the following annotation for the image:

```xml
<annotation>
    <folder></folder>
    <filename>e0d38d159ad3a801d0304d7e275812cc.jpg</filename>
    <path>e0d38d159ad3a801d0304d7e275812cc.jpg</path>
    <source>
        <database>roboflow.ai</database>
    </source>
    <size>
        <width>2284</width>
        <height>1529</height>
        <depth>3</depth>
    </size>
    <segmented>0</segmented>
</annotation>
```

The annotation contains the image name, its path, and the size of the image. Notably, this annotation does not contain any bounding box information. It is a null annotation.
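A check for this case can be sketched in a few lines of Python. The sketch assumes the standard VOC layout, in which each bounding box is stored in its own `<object>` element. The helper names `count_voc_boxes` and `is_unannotated` and the two sample strings are illustrative, not part of any library. Note that a count of zero only tells us the file lists no boxes. It cannot tell a null annotation from a missing one, so flagged images still need a human look.

```python
import xml.etree.ElementTree as ET

# Trimmed-down sample annotations (illustrative, not real dataset files).
NO_BOXES = """<annotation>
    <filename>empty_board.jpg</filename>
    <size><width>2284</width><height>1529</height><depth>3</depth></size>
    <segmented>0</segmented>
</annotation>"""

ONE_BOX = """<annotation>
    <filename>one_piece.jpg</filename>
    <object>
        <name>white-pawn</name>
        <bndbox><xmin>10</xmin><ymin>20</ymin><xmax>50</xmax><ymax>90</ymax></bndbox>
    </object>
</annotation>"""


def count_voc_boxes(xml_text: str) -> int:
    """Return the number of <object> elements in a VOC annotation string."""
    root = ET.fromstring(xml_text)
    # Each labeled bounding box in VOC XML lives in its own <object> element.
    return len(root.findall("object"))


def is_unannotated(xml_text: str) -> bool:
    """True when the annotation lists no objects (either null or missing)."""
    return count_voc_boxes(xml_text) == 0


print(is_unannotated(NO_BOXES))  # True
print(is_unannotated(ONE_BOX))   # False
```

To scan a folder of annotation files, the same functions could be applied to the text of each `.xml` file in turn.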

In COCO JSON, by contrast, the same image is listed in the images portion of the JSON but has no entries in the annotations portion. In effect, COCO JSON says, "Here are the images to include in the dataset," and, "Here are the bounding boxes for those images." If an image is listed but has no bounding boxes, it can be inferred to be a null example.

The image is noted, but there will not be any annotations for image ID 0.

```json
"images": [
    {
        "id": 0,
        "license": 1,
        "file_name": "e0d38d159ad3a801d0304d7e275812cc_jpg.rf.0cd06a940ccc9894109d83792535e3eb.jpg",
        "height": 416,
        "width": 416,
        "date_captured": "2020-03-27T21:30:28+00:00"
    },
```
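The same inference can be made programmatically. The sketch below assumes the usual COCO keys (`images`, `annotations`, `id`, `image_id`, `file_name`). The function name `images_without_annotations` and the small sample dictionary are hypothetical and stand in for a real dataset. As with VOC XML, the result is only a list of images with zero boxes; deciding whether each one is a null example or a missing annotation is still a manual call.

```python
# Illustrative stand-in for a parsed COCO JSON file (not real data).
coco = {
    "images": [
        {"id": 0, "file_name": "empty_board.jpg", "height": 416, "width": 416},
        {"id": 1, "file_name": "one_piece.jpg", "height": 416, "width": 416},
    ],
    "annotations": [
        {"id": 0, "image_id": 1, "category_id": 1, "bbox": [10, 20, 40, 70]},
    ],
}


def images_without_annotations(coco: dict) -> list:
    """Return file names of images that no annotation entry refers to."""
    # Collect every image ID that appears in at least one annotation.
    annotated_ids = {ann["image_id"] for ann in coco.get("annotations", [])}
    return [
        img["file_name"]
        for img in coco.get("images", [])
        if img["id"] not in annotated_ids
    ]


print(images_without_annotations(coco))  # ['empty_board.jpg']
```

For a file on disk, the dictionary would typically come from `json.load` on the opened annotation file.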

Avoid Worrying About this Problem

Roboflow checks all images and their annotations at the time of upload and warns users if any annotations are missing.

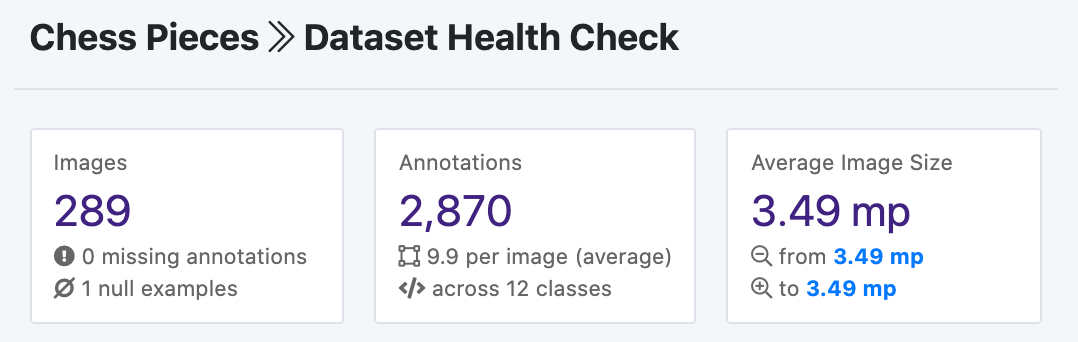

Upon upload, Roboflow runs a dataset health check on all images and annotations. One of the checks is for missing versus null annotations.

This is one of the many ways we catch silent errors that may reduce the performance of your computer vision models. Happy building!